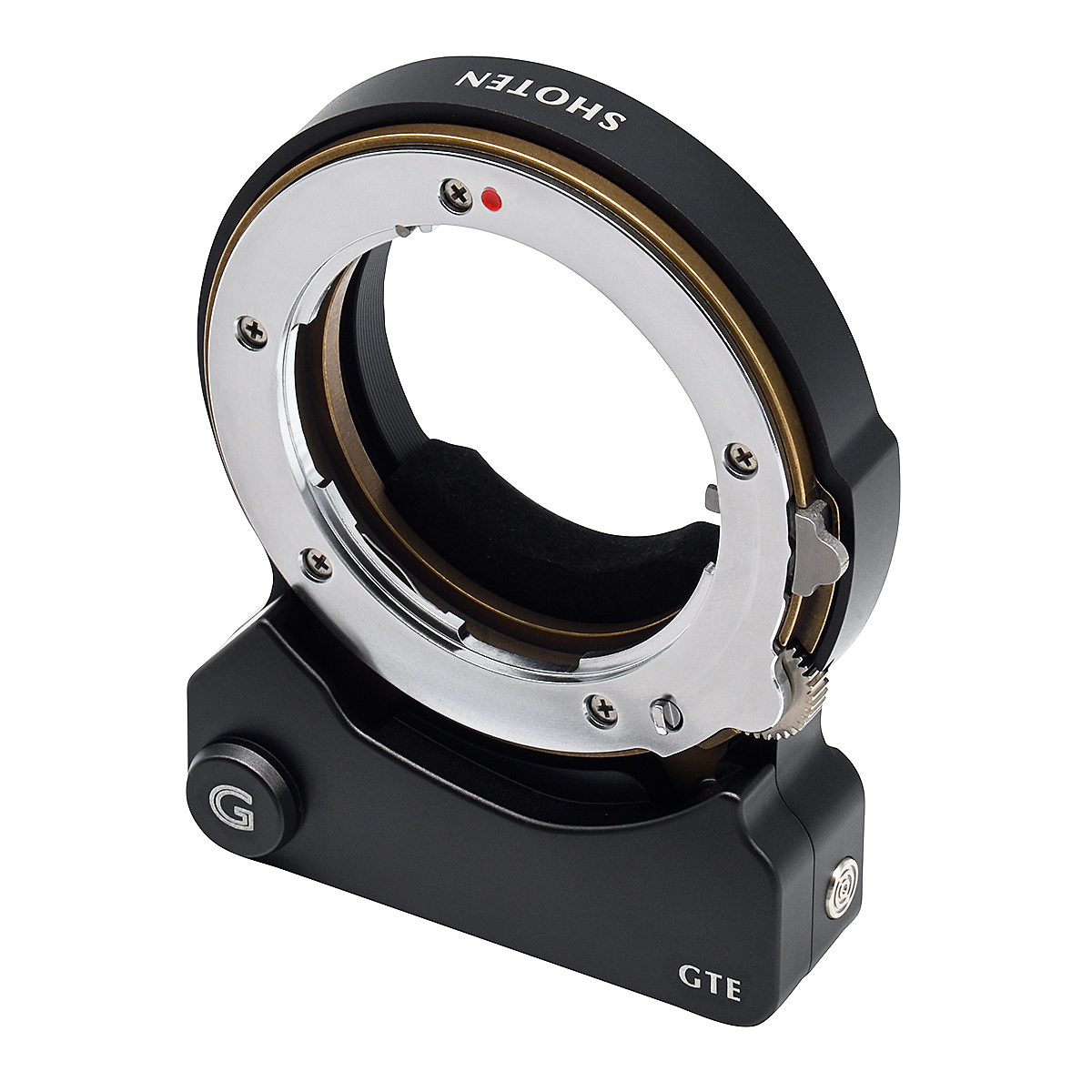

Review: Shoten GTE Contax G to Sony E Autofocus Adapter

For as long as I’ve used digital cameras, I’ve wanted a system in which I can freely and easily share lenses with a 35mm film camera and have similar features across both formats. There are tons of great manual focus options out there, but once I was spoiled by autofocus, finding such a system became a lot more difficult. Is the latest Contax G to Sony E autofocus adapter from Shoten the answer?

About me

Hi, my name is Colin Baker. I’m a service provider network engineer in southwest Wisconsin.

Photo Galleries

Look at the pictures